GeneralLinearModelAlgorithm¶

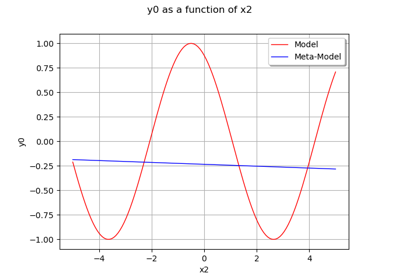

(Source code, png)

- class GeneralLinearModelAlgorithm(*args)¶

Algorithm for the evaluation of general linear models.

- Available constructors:

GeneralLinearModelAlgorithm(inputSample, outputSample, covarianceModel, keepCovariance=True)

GeneralLinearModelAlgorithm(inputSample, outputSample, covarianceModel, basis, keepCovariance=True)

- Parameters:

- inputSample, outputSample2-d sequence of float

The samples

and

.

- covarianceModel

CovarianceModel Covariance model of the Gaussian process. See notes for the details.

- basis

Basis Functional basis to estimate the trend:

. If

, the same basis is used for each marginal output.

- keepCovariancebool, optional

Indicates whether the covariance matrix has to be stored in the result structure GeneralLinearModelResult. Default value is set in resource map key GeneralLinearModelAlgorithm-KeepCovariance

Methods

BuildDistribution(inputSample)Recover the distribution, with metamodel performance in mind.

Accessor to the object's name.

Accessor to the joint probability density function of the physical input vector.

Accessor to the input sample.

getName()Accessor to the object's name.

getNoise()Observation noise variance accessor.

Accessor to the log-likelihood function that writes as argument of the covariance's model parameters.

Accessor to solver used to optimize the covariance model parameters.

Optimization bounds accessor.

Accessor to the covariance model parameters optimization flag.

Accessor to the output sample.

Get the results of the metamodel computation.

Return the weights of the input sample.

hasName()Test if the object is named.

run()Compute the response surface.

setDistribution(distribution)Accessor to the joint probability density function of the physical input vector.

setName(name)Accessor to the object's name.

setNoise(noise)Observation noise variance accessor.

setOptimizationAlgorithm(solver)Accessor to the solver used to optimize the covariance model parameters.

setOptimizationBounds(optimizationBounds)Optimization bounds accessor.

setOptimizeParameters(optimizeParameters)Accessor to the covariance model parameters optimization flag.

Notes

We suppose we have a sample

where

is the sample size,

for all

and

is the model. The objective is to build a metamodel

, using a general linear model: the sample

is considered as the restriction of a Gaussian process

on

. The Gaussian process

is defined by:

where:

with

for any

, where

is the number trend functions of the

-th output component, and

the trend functions. The Gaussian process

is of dimension

with zero mean and covariance function

(see Stochastic process definitions for the notations).

We note:

where

is the total number of trend functions for all output dimensions.

The GeneralLinearModelAlgorithm class estimates the coefficients

and

where

is the vector of parameters of the covariance model, i.e. a subset of

, that has been declared as active (by default, the full vectors

and

). The estimation is done by maximizing the reduced log-likelihood of the model (see the equation (1) below).

Estimation of the parameters

and

We note:

and

where

. The model likelihood writes:

Let

be the Cholesky factor of

, i.e. the lower triangular matrix with positive diagonal such that

. Therefore the log-likelihood is:

(1)¶

where

is a constant independent of

and

. The maximization of (1) leads to the following optimality condition for

:

This expression of

as a function of

is taken as a general relation between

and

and is substituted into (1), leading to a reduced log-likelihood function depending solely on

.

In the particular case where

and

is a part of

, then a further reduction is possible. In this case, let

be the vector

in which

has been substituted by 1. Therefore:

This shows that

is a function of

only, and the optimality condition for

reads:

This leads to a further reduction of the log-likelihood function where both

and

are replaced by their expression in terms of

.

The default optimizer is

TNCand can be changed thanks to the setOptimizationAlgorithm method. User could also change the default optimization solver by setting the GeneralLinearModelAlgorithm-DefaultOptimizationAlgorithm resource map key to one of theNLoptsolver names.It is also possible to proceed as follows:

ask for the reduced log-likelihood function of the GeneralLinearModelAlgorithm thanks to the getObjectiveFunction() method

optimize it with respect to the parameters

and

using any optimization algorithms (that can take into account some additional constraints if needed)

set the optimal parameter value into the covariance model used in the GeneralLinearModelAlgorithm

tell the algorithm not to optimize the parameter using setOptimizeParameters

The behaviour of the reduction is controlled by the following keys in

ResourceMap:ResourceMap.SetAsBool(‘GeneralLinearModelAlgorithm-UseAnalyticalAmplitudeEstimate’, True) to use the reduction associated to

. It has no effect if

or if

and

is not part of

ResourceMap.SetAsBool(‘GeneralLinearModelAlgorithm-UnbiasedVariance’, True) allows one to use the unbiased estimate of

where

is replaced by

in the optimality condition for

, where

is the dimension of the vector

.

With huge samples, the hierarchical matrix implementation could be used if OpenTURNS had been compiled with hmat-oss support.

This implementation, which is based on a compressed representation of an approximated covariance matrix (and its Cholesky factor), has a better complexity both in terms of memory requirements and floating point operations. To use it, the GeneralLinearModelAlgorithm-LinearAlgebra resource map key should be set to HMAT. Default value of the key is LAPACK.

A known centered gaussian observation noise

can be taken into account with

setNoise():Examples

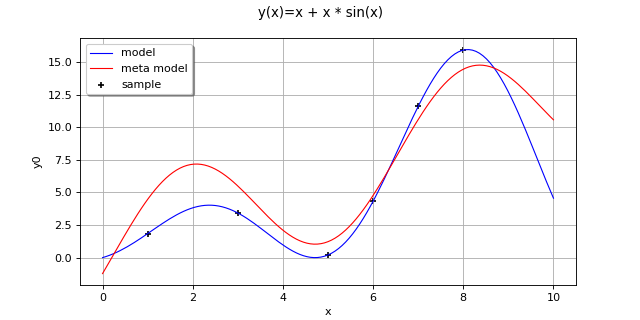

Create the model

and the samples:

>>> import openturns as ot >>> f = ot.SymbolicFunction(['x'], ['x+x * sin(x)']) >>> inputSample = ot.Sample([[1.0], [3.0], [5.0], [6.0], [7.0], [8.0]]) >>> outputSample = f(inputSample)

Create the algorithm:

>>> f1 = ot.SymbolicFunction(['x'], ['sin(x)']) >>> f2 = ot.SymbolicFunction(['x'], ['x']) >>> f3 = ot.SymbolicFunction(['x'], ['cos(x)']) >>> basis = ot.Basis([f1,f2, f3]) >>> covarianceModel = ot.SquaredExponential([1.0]) >>> covarianceModel.setActiveParameter([]) >>> algo = ot.GeneralLinearModelAlgorithm(inputSample, outputSample, covarianceModel, basis) >>> algo.run()

Get the resulting meta model:

>>> result = algo.getResult() >>> metamodel = result.getMetaModel()

- __init__(*args)¶

- static BuildDistribution(inputSample)¶

Recover the distribution, with metamodel performance in mind.

For each marginal, find the best 1-d continuous parametric model else fallback to the use of a nonparametric one.

The selection is done as follow:

We start with a list of all parametric models (all factories)

For each model, we estimate its parameters if feasible.

We check then if model is valid, ie if its Kolmogorov score exceeds a threshold fixed in the MetaModelAlgorithm-PValueThreshold ResourceMap key. Default value is 5%

We sort all valid models and return the one with the optimal criterion.

For the last step, the criterion might be BIC, AIC or AICC. The specification of the criterion is done through the MetaModelAlgorithm-ModelSelectionCriterion ResourceMap key. Default value is fixed to BIC. Note that if there is no valid candidate, we estimate a non-parametric model (

KernelSmoothingorHistogram). The MetaModelAlgorithm-NonParametricModel ResourceMap key allows selecting the preferred one. Default value is HistogramOne each marginal is estimated, we use the Spearman independence test on each component pair to decide whether an independent copula. In case of non independence, we rely on a

NormalCopula.- Parameters:

- sample

Sample Input sample.

- sample

- Returns:

- distribution

Distribution Input distribution.

- distribution

- getClassName()¶

Accessor to the object’s name.

- Returns:

- class_namestr

The object class name (object.__class__.__name__).

- getDistribution()¶

Accessor to the joint probability density function of the physical input vector.

- Returns:

- distribution

Distribution Joint probability density function of the physical input vector.

- distribution

- getInputSample()¶

Accessor to the input sample.

- Returns:

- inputSample

Sample Input sample of a model evaluated apart.

- inputSample

- getName()¶

Accessor to the object’s name.

- Returns:

- namestr

The name of the object.

- getNoise()¶

Observation noise variance accessor.

- Parameters:

- noisesequence of positive float

The noise variance

of each output value.

- getObjectiveFunction()¶

Accessor to the log-likelihood function that writes as argument of the covariance’s model parameters.

Notes

The log-likelihood function may be useful for some postprocessing: maximization using external optimizers for example.

Examples

Create the model

and the samples:

>>> import openturns as ot >>> f = ot.SymbolicFunction(['x0'], ['x0 * sin(x0)']) >>> inputSample = ot.Sample([[1.0], [3.0], [5.0], [6.0], [7.0], [8.0]]) >>> outputSample = f(inputSample)

Create the algorithm:

>>> basis = ot.ConstantBasisFactory().build() >>> covarianceModel = ot.SquaredExponential(1) >>> algo = ot.GeneralLinearModelAlgorithm(inputSample, outputSample, covarianceModel, basis) >>> algo.run()

Get the log-likelihood function:

>>> likelihoodFunction = algo.getObjectiveFunction()

- getOptimizationAlgorithm()¶

Accessor to solver used to optimize the covariance model parameters.

- Returns:

- algorithm

OptimizationAlgorithm Solver used to optimize the covariance model parameters. Default optimizer is

TNC

- algorithm

- getOptimizationBounds()¶

Optimization bounds accessor.

- Returns:

- bounds

Interval Bounds for covariance model parameter optimization.

- bounds

- getOptimizeParameters()¶

Accessor to the covariance model parameters optimization flag.

- Returns:

- optimizeParametersbool

Whether to optimize the covariance model parameters.

- getOutputSample()¶

Accessor to the output sample.

- Returns:

- outputSample

Sample Output sample of a model evaluated apart.

- outputSample

- getResult()¶

Get the results of the metamodel computation.

- Returns:

- result

GeneralLinearModelResult Structure containing all the results obtained after computation and created by the method

run().

- result

- getWeights()¶

Return the weights of the input sample.

- Returns:

- weightssequence of float

The weights of the points in the input sample.

- hasName()¶

Test if the object is named.

- Returns:

- hasNamebool

True if the name is not empty.

- run()¶

Compute the response surface.

Notes

It computes the response surface and creates a

GeneralLinearModelResultstructure containing all the results.

- setDistribution(distribution)¶

Accessor to the joint probability density function of the physical input vector.

- Parameters:

- distribution

Distribution Joint probability density function of the physical input vector.

- distribution

- setName(name)¶

Accessor to the object’s name.

- Parameters:

- namestr

The name of the object.

- setNoise(noise)¶

Observation noise variance accessor.

- Parameters:

- noisesequence of positive float

The noise variance

of each output value.

- setOptimizationAlgorithm(solver)¶

Accessor to the solver used to optimize the covariance model parameters.

- Parameters:

- algorithm

OptimizationAlgorithm Solver used to optimize the covariance model parameters.

- algorithm

- setOptimizationBounds(optimizationBounds)¶

Optimization bounds accessor.

- Parameters:

- bounds

Interval Bounds for covariance model parameter optimization.

- bounds

Notes

Parameters involved by this method are:

Scale parameters,

Amplitude parameters if output dimension is greater than one or analytical sigma disabled,

Additional parameters.

Lower & upper bounds are defined in resource map. Default lower upper bounds value for all parameters is

and defined thanks to the GeneralLinearModelAlgorithm-DefaultOptimizationLowerBound resource map key.

For scale parameters, default upper bounds are set as

times the difference between the max and min values of X for each coordinate, X being the (transformed) input sample. The value

is defined in resource map (GeneralLinearModelAlgorithm-DefaultOptimizationScaleFactor).

Finally for other parameters (amplitude,…), default upper bound is set to

(corresponding resource map key is GeneralLinearModelAlgorithm-DefaultOptimizationUpperBound)

- setOptimizeParameters(optimizeParameters)¶

Accessor to the covariance model parameters optimization flag.

- Parameters:

- optimizeParametersbool

Whether to optimize the covariance model parameters.

OpenTURNS

OpenTURNS