Pagmo

- class Pagmo(*args)

Pagmo algorithms.

This class exposes bio-inspired and evolutionary global optimization algorithms from the Pagmo library. These algorithms start from an initial population and make it evolve to obtain a final population after a defined number of generations (set by setMaximumIterationNumber()). A few of these algorithms allow for multi-objective optimization. In that case the result is not the best point of the final population but a set of non-dominated points: a Pareto front.

- Parameters:

- problem : OptimizationProblem

Optimization problem to solve.

- algoName : str, default='gaco'

Identifier of the optimization method to use.

- startingSample : 2-d sequence of float, optional

Initial population.

Notes

The total number of evaluations is the size of the initial population multiplied by the number of iterations plus one, that is $N \times (n + 1)$ for an initial population of size $N$ and $n$ iterations. Starting points provided through the startingSample parameter should lie within the bounds of the OptimizationProblem, but this is not enforced.

Pagmo provides the following global heuristics:

| Algorithm | Description | Multi-objective | MINLP | Batch |
| --- | --- | --- | --- | --- |
| gaco | Extended Ant Colony Optimization | no | yes | yes |
| de | Differential Evolution | no | no | no |
| sade | Self-adaptive DE (jDE and iDE) | no | no | no |
| de1220 | Self-adaptive DE (de_1220 aka pDE) | no | no | no |
| gwo | Grey wolf optimizer | no | no | no |
| ihs | Improved Harmony Search | no | yes | no |
| pso | Particle Swarm Optimization | no | no | no |
| pso_gen | Particle Swarm Optimization Generational | no | no | yes |
| sea | (N+1)-ES Simple Evolutionary Algorithm | no | no | no |
| sga | Simple Genetic Algorithm | no | yes | no |
| simulated_annealing | Corana’s Simulated Annealing | no | no | no |
| bee_colony | Artificial Bee Colony | no | no | no |
| cmaes | Covariance Matrix Adaptation Evo. Strategy | no | no | yes |
| xnes | Exponential Evolution Strategies | no | no | no |
| nsga2 | Non-dominated Sorting GA | yes | yes | yes |
| moead | Multi-objective EA with Decomposition | yes | no | no |
| moead_gen | Multi-objective EA with Decomposition Gen. | yes | no | yes |
| mhaco | Multi-objective Hypervolume-based ACO | yes | yes | yes |
| nspso | Non-dominated Sorting PSO | yes | no | yes |

Only gaco and ihs natively support constraints. For the other algorithms, constraints are emulated through penalization. For mhaco, the initial population must satisfy the constraints. Otherwise it is rebuilt by bootstrap resampling of the valid points, keeping the same population size as the one provided. Some algorithms support batch evaluation (see setBlockSize()). Default parameters for each algorithm are available in the ResourceMap. Refer to the Pagmo documentation for the corresponding keys.

Examples
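The penalty scheme used to emulate constraints is not specified on this page. As a rough illustration only, the plain-Python sketch below wraps an objective with a static quadratic penalty on violated constraints. Several choices are assumptions and not the OpenTURNS or Pagmo implementation: the helper name `penalized_objective`, the weight `rho`, the quadratic form, and the convention that an inequality constraint is satisfied when g(x) >= 0.

```python
from typing import Callable, List, Sequence

Point = Sequence[float]


def penalized_objective(
    f: Callable[[Point], float],
    ineq: Callable[[Point], List[float]],
    eq: Callable[[Point], List[float]],
    rho: float = 1e3,
) -> Callable[[Point], float]:
    """Return f plus a static quadratic penalty on violated constraints.

    Assumed convention: an inequality constraint is satisfied when g(x) >= 0,
    an equality constraint when h(x) == 0. rho is a hypothetical penalty weight.
    """

    def wrapped(x: Point) -> float:
        # Only violated inequalities (g(x) < 0) contribute to the penalty.
        ineq_violation = sum(min(0.0, g) ** 2 for g in ineq(x))
        # Any nonzero equality residual is a violation.
        eq_violation = sum(h ** 2 for h in eq(x))
        return f(x) + rho * (ineq_violation + eq_violation)

    return wrapped


# Minimize x^2 subject to x >= 1, written as g(x) = x - 1 >= 0.
pf = penalized_objective(
    f=lambda x: x[0] ** 2,
    ineq=lambda x: [x[0] - 1.0],
    eq=lambda x: [],
    rho=100.0,
)
print(pf([2.0]))  # feasible, no penalty: 4.0
print(pf([0.0]))  # infeasible: 0.0 + 100 * 1.0 = 100.0
```

A larger `rho` pushes the penalized minimizer closer to the feasible set, at the cost of a steeper, harder-to-search landscape near the constraint boundary.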

Define an optimization problem to find the minimum of the Rosenbrock function:

```python
>>> import openturns as ot
>>> dim = 2
>>> rosenbrock = ot.SymbolicFunction(['x1', 'x2'], ['(1-x1)^2+100*(x2-x1^2)^2'])
>>> bounds = ot.Interval([-5.0] * dim, [5.0] * dim)
>>> problem = ot.OptimizationProblem(rosenbrock)
>>> problem.setBounds(bounds)
```

Sample the initial population inside a box:

```python
>>> uniform = ot.JointDistribution([ot.Uniform(-2.0, 2.0)] * dim)
>>> ot.RandomGenerator.SetSeed(0)
>>> init_pop = uniform.getSample(5)
```

Run GACO on our problem:

```python
>>> algo = ot.Pagmo(problem, 'gaco', init_pop)
>>> algo.setMaximumIterationNumber(5)
>>> algo.run()
>>> result = algo.getResult()
>>> x_star = result.getOptimalPoint()
>>> y_star = result.getOptimalValue()
```

Get the final population:

```python
>>> final_pop_x = result.getFinalPoints()
>>> final_pop_y = result.getFinalValues()
```

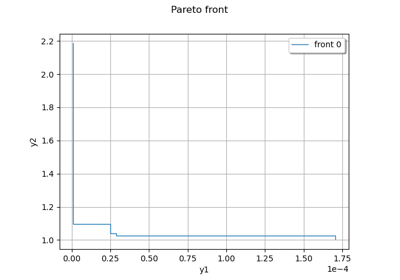
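According to the note on the total number of evaluations, the GACO run above (an initial population of 5 points and 5 iterations) should cost 5 × (5 + 1) = 30 evaluations. The sketch below makes that accounting concrete with a hypothetical toy loop, `toy_generational_search`. It uses per-individual Gaussian mutation with greedy replacement, so it is neither GACO nor Pagmo code. It evaluates the initial population once, then one offspring per individual at each generation.

```python
import random
from typing import Callable, List, Sequence, Tuple


def toy_generational_search(
    f: Callable[[Sequence[float]], float],
    initial_population: Sequence[Sequence[float]],
    n_generations: int,
    seed: int = 0,
) -> Tuple[List[List[float]], List[float], int]:
    """Toy loop (not GACO, not Pagmo) that only illustrates evaluation counting."""
    rng = random.Random(seed)
    calls = 0

    def evaluate(x: Sequence[float]) -> float:
        nonlocal calls
        calls += 1
        return f(x)

    # Generation 0: every individual of the initial population is evaluated once.
    population = [list(x) for x in initial_population]
    values = [evaluate(x) for x in population]
    for _ in range(n_generations):
        for i, x in enumerate(population):
            # One Gaussian-mutated child per individual, kept only if it improves.
            child = [xi + rng.gauss(0.0, 0.1) for xi in x]
            child_value = evaluate(child)
            if child_value < values[i]:
                population[i], values[i] = child, child_value
    return population, values, calls


def rosenbrock(x: Sequence[float]) -> float:
    return (1 - x[0]) ** 2 + 100 * (x[1] - x[0] ** 2) ** 2


init_rng = random.Random(0)
init_pop = [[init_rng.uniform(-2.0, 2.0), init_rng.uniform(-2.0, 2.0)] for _ in range(5)]
final_pop, final_values, calls = toy_generational_search(rosenbrock, init_pop, n_generations=5)
print(calls)  # 5 * (5 + 1) = 30
```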

Define a multi-objective problem:

```python
>>> dim = 2
>>> model = ot.SymbolicFunction(['x', 'y'], ['x^2+y^2*(1-x)^3', '-x^2'])
>>> bounds = ot.Interval([-2.0] * dim, [3.0] * dim)
>>> problem = ot.OptimizationProblem(model)
>>> problem.setBounds(bounds)
```

Sample the initial population inside a box:

```python
>>> uniform = ot.JointDistribution([ot.Uniform(-2.0, 3.0)] * dim)
>>> ot.RandomGenerator.SetSeed(0)
>>> init_pop = uniform.getSample(5)
```

Run NSGA2 on our problem:

```python
>>> algo = ot.Pagmo(problem, 'nsga2', init_pop)
>>> algo.setMaximumIterationNumber(5)
>>> algo.run()
>>> result = algo.getResult()
>>> final_pop_x = result.getFinalPoints()
>>> final_pop_y = result.getFinalValues()
```

Get the best front points and values:

```python
>>> front0 = result.getParetoFrontsIndices()[0]
>>> front0_x = final_pop_x.select(front0)
>>> front0_y = final_pop_y.select(front0)
```
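In the example above, `getParetoFrontsIndices()` groups the indices of the final population by Pareto front, and the first front holds the best, non-dominated points. To illustrate what a front is, the sketch below performs a naive non-dominated sorting in plain Python, assuming every objective is minimized. `dominates` and `pareto_fronts` are hypothetical helpers, not the Pagmo algorithm. The ordering of indices inside a front may also differ from what OpenTURNS returns.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a Pareto-dominates b (minimization): no worse everywhere, better somewhere."""
    return all(ai <= bi for ai, bi in zip(a, b)) and any(ai < bi for ai, bi in zip(a, b))


def pareto_fronts(values: Sequence[Sequence[float]]) -> List[List[int]]:
    """Naive non-dominated sorting; fronts[0] holds the indices of non-dominated points."""
    remaining = list(range(len(values)))
    fronts: List[List[int]] = []
    while remaining:
        # Current front: points not dominated by any other remaining point.
        front = [
            i for i in remaining
            if not any(dominates(values[j], values[i]) for j in remaining if j != i)
        ]
        fronts.append(front)
        remaining = [i for i in remaining if i not in front]
    return fronts


final_values = [[1.0, 4.0], [2.0, 2.0], [3.0, 3.0], [4.0, 1.0], [4.0, 4.0]]
fronts = pareto_fronts(final_values)
front0_values = [final_values[i] for i in fronts[0]]
print(fronts)         # [[0, 1, 3], [2], [4]]
print(front0_values)  # [[1.0, 4.0], [2.0, 2.0], [4.0, 1.0]]
```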

Methods

| Method | Description |
| --- | --- |
| GetAlgorithmNames() | Accessor to the list of algorithm names provided. |
| getAlgorithmName() | Accessor to the algorithm name. |
| getBlockSize() | Block size accessor. |
| getCheckStatus() | Accessor to check status flag. |
| getClassName() | Accessor to the object's class name. |
| getMaximumAbsoluteError() | Accessor to maximum allowed absolute error. |
| getMaximumCallsNumber() | Accessor to maximum allowed number of calls. |
| getMaximumConstraintError() | Accessor to maximum allowed constraint error. |
| getMaximumIterationNumber() | Accessor to maximum allowed number of iterations. |
| getMaximumRelativeError() | Accessor to maximum allowed relative error. |
| getMaximumResidualError() | Accessor to maximum allowed residual error. |
| getMaximumTimeDuration() | Accessor to the maximum duration. |
| getName() | Accessor to the object's name. |
| getProblem() | Accessor to optimization problem. |
| getResult() | Accessor to optimization result. |
| getSeed() | Random generator seed accessor. |
| getStartingPoint() | Accessor to starting point. |
| getStartingSample() | Accessor to the sample of starting points. |
| hasName() | Test if the object is named. |
| run() | Launch the optimization. |
| setAlgorithmName(algoName) | Accessor to the algorithm name. |
| setBlockSize(blockSize) | Block size accessor. |
| setCheckStatus(checkStatus) | Accessor to check status flag. |
| setMaximumAbsoluteError(maximumAbsoluteError) | Accessor to maximum allowed absolute error. |
| setMaximumCallsNumber(maximumCallsNumber) | Accessor to maximum allowed number of calls. |
| setMaximumConstraintError(maximumConstraintError) | Accessor to maximum allowed constraint error. |
| setMaximumIterationNumber(maximumIterationNumber) | Accessor to maximum allowed number of iterations. |
| setMaximumRelativeError(maximumRelativeError) | Accessor to maximum allowed relative error. |
| setMaximumResidualError(maximumResidualError) | Accessor to maximum allowed residual error. |
| setMaximumTimeDuration(maximumTime) | Accessor to the maximum duration. |
| setName(name) | Accessor to the object's name. |
| setProblem(problem) | Accessor to optimization problem. |
| setProgressCallback(*args) | Set up a progress callback. |
| setResult(result) | Accessor to optimization result. |
| setSeed(seed) | Random generator seed accessor. |
| setStartingPoint(point) | Accessor to starting point. |
| setStartingSample(startingSample) | Accessor to the sample of starting points. |
| setStopCallback(*args) | Set up a stop callback. |
| getMaximumEvaluationNumber | |
| setMaximumEvaluationNumber | |

- __init__(*args)

- static GetAlgorithmNames()

Accessor to the list of algorithm names provided.

- Returns:

- names : Description

List of algorithm names provided, following Pagmo's naming convention.

- getAlgorithmName()

Accessor to the algorithm name.

- Returns:

- algoName : str

The identifier of the algorithm.

- getBlockSize()

Block size accessor.

- Returns:

- blockSize : int

Batch evaluation granularity.

- getCheckStatus()

Accessor to check status flag.

- Returns:

- checkStatus : bool

Whether to check the termination status. If set to False, run() does not throw an exception when the algorithm does not fully converge, which still allows one to retrieve a feasible candidate.

- getClassName()

Accessor to the object’s class name.

- Returns:

- class_name : str

The object class name (object.__class__.__name__).

- getMaximumAbsoluteError()

Accessor to maximum allowed absolute error.

- Returns:

- maximumAbsoluteError : float

Maximum allowed absolute error, where the absolute error is defined by $\epsilon^a_n = \|\mathbf{x}_{n+1} - \mathbf{x}_n\|_{\infty}$, where $\mathbf{x}_{n+1}$ and $\mathbf{x}_n$ are two consecutive approximations of the optimum.
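The absolute error is the infinity norm (the largest absolute component) of the difference between two consecutive approximations of the optimum. A plain-Python sketch on two made-up iterates follows. The function name `absolute_error` is hypothetical and not part of the OpenTURNS API.

```python
from typing import Sequence


def absolute_error(x_prev: Sequence[float], x_next: Sequence[float]) -> float:
    """Infinity norm of x_next - x_prev (two consecutive approximations)."""
    return max(abs(b - a) for a, b in zip(x_prev, x_next))


eps_a = absolute_error([0.5, 0.25], [0.75, 0.5])
print(eps_a)  # 0.25
```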

- getMaximumCallsNumber()

Accessor to maximum allowed number of calls.

- Returns:

- maximumCallsNumber : int

Maximum allowed number of direct objective function calls through the () operator. Possible indirect calls made while computing finite-difference gradients are not taken into account.
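To make "direct calls through the () operator" concrete, the sketch below wraps an objective in a hypothetical `CountingFunction` that counts only calls made through its own `__call__` and refuses calls beyond a budget. This is a simplified model, not how OpenTURNS enforces the limit. In particular, raising the hypothetical `CallBudgetExceeded` error is an assumption made for illustration.

```python
from typing import Callable, Sequence


class CallBudgetExceeded(RuntimeError):
    """Hypothetical error raised once the call budget is spent."""


class CountingFunction:
    """Wraps an objective, counting direct calls f(x) against a budget."""

    def __init__(self, f: Callable[[Sequence[float]], float], max_calls: int) -> None:
        self.f = f
        self.max_calls = max_calls
        self.calls = 0

    def __call__(self, x: Sequence[float]) -> float:
        # Only calls made through this wrapper's () operator are counted.
        if self.calls >= self.max_calls:
            raise CallBudgetExceeded(f"budget of {self.max_calls} calls exhausted")
        self.calls += 1
        return self.f(x)


sphere = CountingFunction(lambda x: sum(v * v for v in x), max_calls=3)
values = [sphere([float(i), 0.0]) for i in range(3)]
print(sphere.calls, values)  # 3 [0.0, 1.0, 4.0]
```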

- getMaximumConstraintError()

Accessor to maximum allowed constraint error.

- Returns:

- maximumConstraintError : float

Maximum allowed constraint error, where the constraint error is defined by $\epsilon^c_n = \|\mathbf{g}(\mathbf{x}_n)\|_{\infty}$, where $\mathbf{x}_n$ is the current approximation of the optimum and $\mathbf{g}$ is the function that gathers all the equality and inequality constraints (violated values only).
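The constraint error is the infinity norm of the violated constraint values only, so satisfied constraints contribute zero. The plain-Python sketch below assumes that an inequality constraint is satisfied when g(x) >= 0 and an equality constraint when h(x) == 0. Both the convention and the helper name `constraint_error` are assumptions made for illustration.

```python
from typing import Sequence


def constraint_error(ineq_values: Sequence[float], eq_values: Sequence[float]) -> float:
    """Infinity norm of the violated constraint values only.

    Assumed convention: inequalities are satisfied when g(x) >= 0,
    equalities when h(x) == 0.
    """
    # An inequality g(x) < 0 is violated by -g(x); satisfied ones contribute 0.
    violations = [max(0.0, -g) for g in ineq_values]
    violations += [abs(h) for h in eq_values]
    return max(violations, default=0.0)


eps_c = constraint_error(ineq_values=[0.5, -0.2], eq_values=[0.1])
print(eps_c)  # 0.2
```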

- getMaximumIterationNumber()

Accessor to maximum allowed number of iterations.

- Returns:

- maximumIterationNumber : int

Maximum allowed number of iterations.

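- getMaximumRelativeError()

Accessor to maximum allowed relative error.

- Returns:

- maximumRelativeError : float

Maximum allowed relative error, where the relative error is defined by $\epsilon^r_n = \frac{\|\mathbf{x}_{n+1} - \mathbf{x}_n\|_{\infty}}{\|\mathbf{x}_{n+1}\|_{\infty}}$ if $\|\mathbf{x}_{n+1}\|_{\infty} \neq 0$, else $\epsilon^r_n = -1$.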
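The relative error divides the infinity-norm step between two consecutive approximations by the infinity norm of the newer one, and falls back to the sentinel value -1 when that norm is zero. A plain-Python sketch with a hypothetical function name:

```python
from typing import Sequence


def relative_error(x_prev: Sequence[float], x_next: Sequence[float]) -> float:
    """||x_next - x_prev||_inf / ||x_next||_inf, or -1 if ||x_next||_inf == 0."""
    norm_next = max(abs(v) for v in x_next)
    if norm_next == 0.0:
        return -1.0  # relative error undefined: sentinel value
    step = max(abs(b - a) for a, b in zip(x_prev, x_next))
    return step / norm_next


print(relative_error([1.0, 1.5], [1.0, 2.0]))  # 0.5 / 2.0 = 0.25
print(relative_error([1.0], [0.0]))            # -1.0
```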

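- getMaximumResidualError()

Accessor to maximum allowed residual error.

- Returns:

- maximumResidualError : float

Maximum allowed residual error, where the residual error is defined by $\epsilon^{\mathrm{res}}_n = \frac{\|f(\mathbf{x}_{n+1}) - f(\mathbf{x}_n)\|}{\|f(\mathbf{x}_{n+1})\|}$ if $\|f(\mathbf{x}_{n+1})\| \neq 0$, else $\epsilon^{\mathrm{res}}_n = -1$.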
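The residual error compares two consecutive objective values rather than two points. The sketch below assumes a scalar objective, so the norm reduces to an absolute value. The name `residual_error` is hypothetical.

```python
def residual_error(f_prev: float, f_next: float) -> float:
    """|f(x_{n+1}) - f(x_n)| / |f(x_{n+1})|, or -1 if f(x_{n+1}) == 0."""
    if f_next == 0.0:
        return -1.0  # residual error undefined: sentinel value
    return abs(f_next - f_prev) / abs(f_next)


print(residual_error(f_prev=5.0, f_next=4.0))  # 1.0 / 4.0 = 0.25
```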

- getMaximumTimeDuration()

Accessor to the maximum duration.

- Returns:

- maximumTime : float

Maximum optimization duration in seconds.

- getName()

Accessor to the object’s name.

- Returns:

- name : str

The name of the object.

- getProblem()

Accessor to optimization problem.

- Returns:

- problem : OptimizationProblem

Optimization problem.

- getResult()

Accessor to optimization result.

- Returns:

- result : OptimizationResult

Result class.

- getSeed()

Random generator seed accessor.

- Returns:

- seed : int

Seed.

- getStartingSample()

Accessor to the sample of starting points.

- Returns:

- startingSample : Sample

The initial population.

- hasName()

Test if the object is named.

- Returns:

- hasName : bool

True if the name is not empty.

- run()

Launch the optimization.

- setAlgorithmName(algoName)

Accessor to the algorithm name.

- Parameters:

- algoName : str

The identifier of the algorithm.

- setBlockSize(blockSize)

Block size accessor.

- Parameters:

- blockSize : int

Batch evaluation granularity.
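One plausible reading of "batch evaluation granularity" is that the population is passed to the model in chunks of at most `blockSize` points, so a vectorized model can evaluate several points per call. The plain-Python sketch below illustrates that reading only. `evaluate_in_blocks` and `sphere_batch` are hypothetical, and the exact batching performed by OpenTURNS for each algorithm is not described here.

```python
from typing import Callable, List, Sequence

Point = Sequence[float]


def evaluate_in_blocks(
    f_batch: Callable[[List[Point]], List[float]],
    population: Sequence[Point],
    block_size: int,
) -> List[float]:
    """Evaluate population by successive chunks of at most block_size points."""
    if block_size < 1:
        raise ValueError("block_size must be at least 1")
    values: List[float] = []
    for start in range(0, len(population), block_size):
        block = list(population[start:start + block_size])
        values.extend(f_batch(block))  # one batch call per chunk
    return values


batch_sizes: List[int] = []


def sphere_batch(points: List[Point]) -> List[float]:
    """Hypothetical vectorized objective that records each batch size."""
    batch_sizes.append(len(points))
    return [sum(v * v for v in p) for p in points]


population = [[float(i), 1.0] for i in range(7)]
values = evaluate_in_blocks(sphere_batch, population, block_size=3)
print(batch_sizes)  # [3, 3, 1]
print(values)       # [1.0, 2.0, 5.0, 10.0, 17.0, 26.0, 37.0]
```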

- setCheckStatus(checkStatus)

Accessor to check status flag.

- Parameters:

- checkStatus : bool

Whether to check the termination status. If set to False, run() does not throw an exception when the algorithm does not fully converge, which still allows one to retrieve a feasible candidate.

- setMaximumAbsoluteError(maximumAbsoluteError)

Accessor to maximum allowed absolute error.

- Parameters:

- maximumAbsoluteError : float

Maximum allowed absolute error, where the absolute error is defined by $\epsilon^a_n = \|\mathbf{x}_{n+1} - \mathbf{x}_n\|_{\infty}$, where $\mathbf{x}_{n+1}$ and $\mathbf{x}_n$ are two consecutive approximations of the optimum.

- setMaximumCallsNumber(maximumCallsNumber)

Accessor to maximum allowed number of calls.

- Parameters:

- maximumCallsNumber : int

Maximum allowed number of direct objective function calls through the () operator. Possible indirect calls made while computing finite-difference gradients are not taken into account.

- setMaximumConstraintError(maximumConstraintError)

Accessor to maximum allowed constraint error.

- Parameters:

- maximumConstraintError : float

Maximum allowed constraint error, where the constraint error is defined by $\epsilon^c_n = \|\mathbf{g}(\mathbf{x}_n)\|_{\infty}$, where $\mathbf{x}_n$ is the current approximation of the optimum and $\mathbf{g}$ is the function that gathers all the equality and inequality constraints (violated values only).

- setMaximumIterationNumber(maximumIterationNumber)

Accessor to maximum allowed number of iterations.

- Parameters:

- maximumIterationNumber : int

Maximum allowed number of iterations.

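- setMaximumRelativeError(maximumRelativeError)

Accessor to maximum allowed relative error.

- Parameters:

- maximumRelativeError : float

Maximum allowed relative error, where the relative error is defined by $\epsilon^r_n = \frac{\|\mathbf{x}_{n+1} - \mathbf{x}_n\|_{\infty}}{\|\mathbf{x}_{n+1}\|_{\infty}}$ if $\|\mathbf{x}_{n+1}\|_{\infty} \neq 0$, else $\epsilon^r_n = -1$.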

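- setMaximumResidualError(maximumResidualError)

Accessor to maximum allowed residual error.

- Parameters:

- maximumResidualError : float

Maximum allowed residual error, where the residual error is defined by $\epsilon^{\mathrm{res}}_n = \frac{\|f(\mathbf{x}_{n+1}) - f(\mathbf{x}_n)\|}{\|f(\mathbf{x}_{n+1})\|}$ if $\|f(\mathbf{x}_{n+1})\| \neq 0$, else $\epsilon^{\mathrm{res}}_n = -1$.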

- setMaximumTimeDuration(maximumTime)

Accessor to the maximum duration.

- Parameters:

- maximumTime : float

Maximum optimization duration in seconds.

- setName(name)

Accessor to the object’s name.

- Parameters:

- name : str

The name of the object.

- setProblem(problem)

Accessor to optimization problem.

- Parameters:

- problem : OptimizationProblem

Optimization problem.

- setProgressCallback(*args)

Set up a progress callback.

Can be used to programmatically report the progress of an optimization.

- Parameters:

- callback : callable

Takes a float argument: the progress, as a percentage.

Examples

```python
>>> import sys
>>> import openturns as ot
>>> rosenbrock = ot.SymbolicFunction(['x1', 'x2'], ['(1-x1)^2+100*(x2-x1^2)^2'])
>>> problem = ot.OptimizationProblem(rosenbrock)
>>> solver = ot.OptimizationAlgorithm(problem)
>>> solver.setStartingPoint([0, 0])
>>> solver.setMaximumResidualError(1.e-3)
>>> solver.setMaximumCallsNumber(10000)
>>> def report_progress(progress):
...     sys.stderr.write('-- progress=' + str(progress) + '%\n')
>>> solver.setProgressCallback(report_progress)
>>> solver.run()
```
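To show the callback contract in isolation, the hypothetical driver below runs a fixed number of placeholder steps and calls the progress callback after each one. It assumes progress means completed iterations over the maximum, expressed in percent. How OpenTURNS actually computes the percentage is not described here, and `run_with_progress` is not part of its API.

```python
import sys
from typing import Callable, List, Optional


def run_with_progress(
    n_iterations: int,
    step: Callable[[int], None],
    progress_callback: Optional[Callable[[float], None]] = None,
) -> None:
    """Hypothetical driver: run step(i), then report progress in percent."""
    for i in range(n_iterations):
        step(i)
        if progress_callback is not None:
            # Assumed definition: completed iterations over the maximum, in percent.
            progress_callback(100.0 * (i + 1) / n_iterations)


reported: List[float] = []


def report_progress(progress: float) -> None:
    reported.append(progress)
    sys.stderr.write('-- progress=' + str(progress) + '%\n')


run_with_progress(4, step=lambda i: None, progress_callback=report_progress)
print(reported)  # [25.0, 50.0, 75.0, 100.0]
```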

- setResult(result)

Accessor to optimization result.

- Parameters:

- result : OptimizationResult

Result class.

- setSeed(seed)

Random generator seed accessor.

- Parameters:

- seed : int

Seed.

Notes

The default is set by the Pagmo-InitialSeed ResourceMap entry.

- setStartingSample(startingSample)

Accessor to the sample of starting points.

- Parameters:

- startingSample : 2-d sequence of float

The initial population.

- setStopCallback(*args)

Set up a stop callback.

Can be used to programmatically stop an optimization.

- Parameters:

- callback : callable

Takes no argument and returns an int (or bool) deciding whether to stop (nonzero or True) or continue (zero or False).

Examples

```python
>>> import openturns as ot
>>> rosenbrock = ot.SymbolicFunction(['x1', 'x2'], ['(1-x1)^2+100*(x2-x1^2)^2'])
>>> problem = ot.OptimizationProblem(rosenbrock)
>>> solver = ot.OptimizationAlgorithm(problem)
>>> solver.setStartingPoint([0, 0])
>>> solver.setMaximumResidualError(1.e-3)
>>> solver.setMaximumCallsNumber(10000)
>>> def ask_stop():
...     return True
>>> solver.setStopCallback(ask_stop)
>>> solver.run()
```
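The exact points at which OpenTURNS polls the stop callback are not described here. The hypothetical driver below polls it once before each iteration and stops as soon as it returns a truthy value, which matches the example's `ask_stop` returning True. `run_until_stopped` and `ask_stop_after_three` are illustrative names only.

```python
from typing import Callable, Optional


def run_until_stopped(
    max_iterations: int,
    stop_callback: Optional[Callable[[], bool]] = None,
) -> int:
    """Hypothetical driver: poll stop_callback before each iteration.

    Returns the number of iterations actually performed.
    """
    done = 0
    for _ in range(max_iterations):
        # A truthy return value (True or a nonzero int) requests a stop.
        if stop_callback is not None and stop_callback():
            break
        done += 1  # one optimizer iteration would run here
    return done


polls = {"count": 0}


def ask_stop_after_three() -> bool:
    """Hypothetical callback that lets three iterations run, then asks to stop."""
    polls["count"] += 1
    return polls["count"] > 3


iterations = run_until_stopped(10, ask_stop_after_three)
print(iterations)                            # 3
print(run_until_stopped(10, lambda: True))   # 0
```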

OpenTURNS